Exploring the Impact of Few-Shot Prompts on GPT-3 Performance: Insights from Adam Shimi’s Experiment

Adam Shimi recently proposed an experiment on few-shot prompting with GPT-3. His goal was to see whether larger models handle a broader range of prompting styles effectively. The results offer insight into how different models behave and raise questions about how much their performance varies from one prompt to the next.

The Experiment Framework: A Dive into Few-Shot Prompting

Shimi’s experiment tested a range of few-shot prompts on the Stanford Sentiment Treebank (SST), a well-known dataset for sentiment analysis. The prompts were written to show how models of different sizes, from the smaller GPT-2 variants to the larger GPT-3 configurations, respond to different prompting styles. The expectation was that larger models would answer accurately across a wider range of prompts.
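To make "prompting styles" concrete, here is a minimal sketch of how the same labelled examples might be rendered under different few-shot templates. The class, the templates, and the example sentences are all hypothetical illustrations. They are not Shimi’s actual prompts, and the sentences are not taken from SST.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptStyle:
    """One way of formatting a labelled example (hypothetical styles only)."""
    name: str
    template: str          # must contain {text} and {label}
    separator: str = "\n\n"


def build_few_shot_prompt(style, examples, query):
    """Render the labelled examples, then the query with its label left blank."""
    shots = [style.template.format(text=t, label=l) for t, l in examples]
    # Leave the final label empty so the model's continuation is the prediction.
    final = style.template.format(text=query, label="").rstrip()
    return style.separator.join(shots + [final])


# Two made-up styles; a real experiment would vary many more of these.
STYLES = [
    PromptStyle("review", "Review: {text}\nSentiment: {label}"),
    PromptStyle("qa", 'Q: Is this sentence positive or negative? "{text}"\nA: {label}'),
]

# Made-up sentences for illustration (not SST items).
EXAMPLES = [
    ("An absolute delight from start to finish.", "positive"),
    ("Dull, overlong, and painfully unfunny.", "negative"),
]

if __name__ == "__main__":
    for style in STYLES:
        print(f"--- {style.name} ---")
        print(build_few_shot_prompt(style, EXAMPLES, "A fine film."))
```

Each style yields a different prompt string for the same query, which is the kind of variation the experiment measures model accuracy against.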

Mixed Results: Performance Insights

The results from Shimi’s experiment were decidedly mixed. The GPT-3 models showed some promise, while the GPT-2 models performed so poorly that their outputs were largely unusable. Surprisingly, accuracy did not increase consistently with model size. In the table below, the 1.3 billion parameter model (neo-1.3B, 63.0) outperformed the 2.7 billion parameter version (neo-2.7B, 56.5), and the "babbage" model (69.4) surpassed "curie" (67.4) in mean accuracy. This unexpected trend raised questions about the relationship between model size and how well a model responds to prompts.
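The size inversions described above can be checked directly against the mean accuracies reported in this article's results table. The sketch below is only an illustration: the accuracy figures are copied from that table, while the within-family size orderings (ada, then babbage, then curie; 1.3B, then 2.7B) and the function name are supplied here as assumptions.

```python
# Mean accuracies (%) copied from the article's results table.
MEAN_ACCURACY = {
    "gpt3-ada": 51.9,
    "gpt3-babbage": 69.4,
    "gpt3-curie": 67.4,
    "neo-1.3B": 63.0,
    "neo-2.7B": 56.5,
}

# Assumed ordering within each family, smallest model first.
FAMILIES = {
    "gpt3": ["gpt3-ada", "gpt3-babbage", "gpt3-curie"],
    "neo": ["neo-1.3B", "neo-2.7B"],
}


def size_inversions(families, accuracy):
    """Return (smaller, larger) adjacent pairs where the smaller model scored higher."""
    inversions = []
    for models in families.values():
        for smaller, larger in zip(models, models[1:]):
            if accuracy[smaller] > accuracy[larger]:
                inversions.append((smaller, larger))
    return inversions


if __name__ == "__main__":
    print(size_inversions(FAMILIES, MEAN_ACCURACY))
    # [('gpt3-babbage', 'gpt3-curie'), ('neo-1.3B', 'neo-2.7B')]
```

Only adjacent models within a family are compared, since a cross-family comparison would mix differences in architecture and training data with differences in size.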

The following table summarizes the mean accuracy and standard deviation in accuracy across various models tested during the experiment:

| Model | Mean Accuracy (%) | Standard Deviation in Accuracy (fraction) |
|---|---|---|
| gpt3-ada | 51.9 | 0.0368 |
| gpt3-babbage | 69.4 | 0.0840 |
| gpt3-curie | 67.4 | 0.0807 |
| neo-1.3B | 63.0 | 0.0522 |
| neo-2.7B | 56.5 | 0.0684 |
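For readers who want to produce a summary like the one above from their own runs, the following sketch shows one plausible way to do it. It assumes the statistics are taken over prompts, that accuracies are stored as fractions, and that the sample standard deviation is used. The article does not confirm any of these choices, and the input numbers are toy values, not the experiment's data.

```python
from statistics import mean, stdev


def summarize(per_prompt_accuracy):
    """Map each model to (mean accuracy in %, sample std. dev. as a fraction)."""
    summary = {}
    for model, accs in per_prompt_accuracy.items():
        summary[model] = (round(100 * mean(accs), 1), round(stdev(accs), 4))
    return summary


# Toy per-prompt accuracies (one value per prompt), NOT the experiment's data.
TOY_ACCURACY = {
    "model-a": [0.50, 0.55, 0.60],
    "model-b": [0.70, 0.60, 0.80],
}

if __name__ == "__main__":
    for model, (avg, sd) in summarize(TOY_ACCURACY).items():
        print(f"| {model} | {avg} | {sd} |")
```

A high standard deviation here would mean the model's accuracy depends strongly on which prompt is used, independent of how good its average is.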

Variability and Correlation: Unanticipated Findings

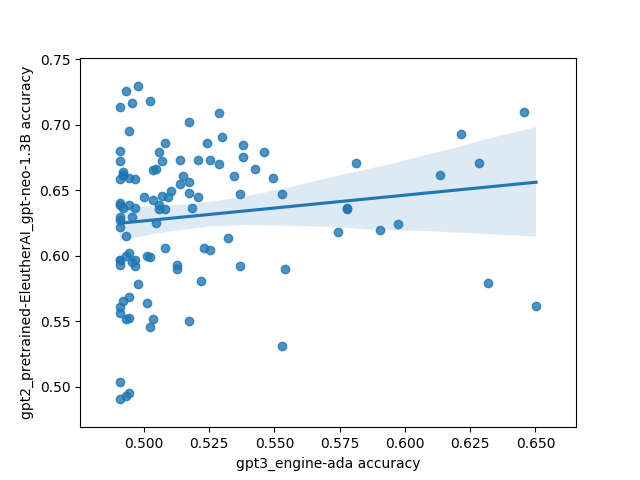

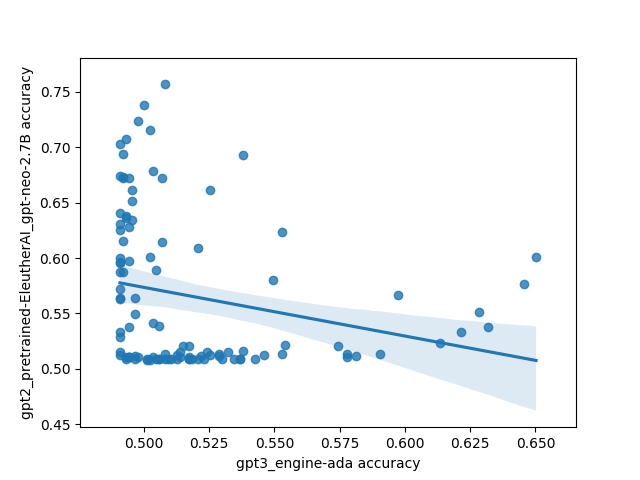

One of the most striking findings from Shimi’s research was how little the models agreed on which prompts worked well: a prompt's accuracy on one model was not correlated with its accuracy on another. This was particularly surprising, as one would typically expect models trained on similar datasets to prefer similar types of prompts. The inconsistency points to a complex interplay between model architecture, training data, and the prompts themselves.

The plots accompanying the experiment show this variability clearly. In each plot, every point represents a prompt, and the axes give that prompt's performance on different models. The absence of a clear correlation suggests that a prompt's effectiveness depends on factors beyond model size or training data alone.
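One simple way to quantify what such scatter plots show is to compute the Pearson correlation between two models' per-prompt accuracies. The sketch below assumes each model has one accuracy value per prompt, listed in the same prompt order. The helper names and the toy numbers are hypothetical, and the article does not say which correlation measure, if any, Shimi used.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(vx * vy)


def pairwise_correlations(per_prompt_accuracy):
    """Correlate every pair of models over the same ordered list of prompts."""
    return {
        (a, b): pearson(per_prompt_accuracy[a], per_prompt_accuracy[b])
        for a, b in combinations(sorted(per_prompt_accuracy), 2)
    }


# Toy data: index i in every list refers to the same prompt. NOT real results.
TOY_ACCURACY = {
    "model-a": [0.5, 0.6, 0.7, 0.8],
    "model-b": [0.8, 0.7, 0.6, 0.5],
    "model-c": [0.5, 0.6, 0.7, 0.8],
}

if __name__ == "__main__":
    for pair, r in pairwise_correlations(TOY_ACCURACY).items():
        print(pair, round(r, 3))
```

Values near zero for a pair of models would match the pattern described above, where a prompt that helps one model tells you little about how it will fare on another.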

Visualizing the Results: The Importance of Plots

The plots are the clearest way to see what is going on. Each one shows SST accuracy across models, prompt by prompt. Reading them, it is evident that a prompt that yields high accuracy on one model may perform poorly on another. For developers and researchers refining their prompt engineering, the practical lesson is that a prompt tuned on one model should be re-evaluated before it is reused on a different one.

The Code Behind the Experiment

For those interested in replicating or extending Shimi’s findings, the code used in the experiment is available. That openness makes it easier for others in the AI community to check the results and to explore few-shot prompting and model performance further.

Experiments like Shimi’s offer useful insight into the nuanced behavior of language models. By examining how few-shot prompts perform across models, researchers can better understand how prompt choice interacts with model choice, and can avoid assuming that a larger model will automatically handle every prompt better.
