Unveiling StarCoder2: The Next Evolution in Code Language Models

In the dynamic world of artificial intelligence and machine learning, code language models have become essential tools for developers. The release of StarCoder2 by BigCode marks a significant advancement in this domain, introducing a new generation of transparently trained open code LLMs (Large Language Models). StarCoder2 comes in three model sizes, all trained on a large code dataset known as The Stack v2.

What is StarCoder2?

StarCoder2 is a family of open LLMs tailored for code generation and comprehension. It comes in three versions that differ in parameter count: 3B, 7B, and 15B. The flagship model, StarCoder2-15B, was trained on over 4 trillion tokens spanning 600+ programming languages from The Stack v2.

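All StarCoder2 models use Grouped Query Attention and have a context window of 16,384 tokens with sliding window attention of 4,096 tokens. Grouped Query Attention lets several query heads share one set of key/value heads, which shrinks the key/value cache at inference time. Sliding window attention limits each token to attending over a fixed number of preceding tokens, which keeps the cost of attention manageable on long inputs. Together, these choices let the models work with large code contexts efficiently.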
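To make the two attention ideas above concrete, here is a minimal sketch in plain Python. It is not StarCoder2's actual implementation: the function names are hypothetical, the toy sizes are chosen for readability, and the convention that a token sees itself plus the previous `window - 1` positions is an assumption (real implementations can differ by one).

```python
def sliding_window_causal_mask(seq_len: int, window: int) -> list[list[bool]]:
    """Return mask[i][j] == True when query position i may attend to key position j.

    Assumed convention: position i sees itself and the previous window - 1 positions.
    """
    return [
        [(j <= i) and (i - j < window) for j in range(seq_len)]
        for i in range(seq_len)
    ]


def kv_head_for_query_head(query_head: int, num_query_heads: int, num_kv_heads: int) -> int:
    """Grouped Query Attention: consecutive query heads share one key/value head."""
    if num_query_heads % num_kv_heads != 0:
        raise ValueError("num_query_heads must be a multiple of num_kv_heads")
    group_size = num_query_heads // num_kv_heads
    return query_head // group_size


# Toy sizes for illustration only (not the real 16,384 / 4,096 configuration).
for row in sliding_window_causal_mask(seq_len=6, window=3):
    print("".join("x" if allowed else "." for allowed in row))
# x.....
# xx....
# xxx...
# .xxx..
# ..xxx.
# ...xxx

# With 8 query heads and 2 key/value heads, heads 0-3 share KV head 0 and heads 4-7 share KV head 1.
print([kv_head_for_query_head(h, 8, 2) for h in range(8)])
# [0, 0, 0, 0, 1, 1, 1, 1]
```

Each row of the printed mask has at most `window` allowed positions, which is why the cost per token stays bounded even as the input grows.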

Here’s a brief overview of each model in the StarCoder2 family:

- StarCoder2-3B: Trained on over 3 trillion tokens across 17 programming languages.

- StarCoder2-7B: Trained on over 3.5 trillion tokens, also covering 17 programming languages.

- StarCoder2-15B: The most advanced model, trained on over 4 trillion tokens and 600+ programming languages.

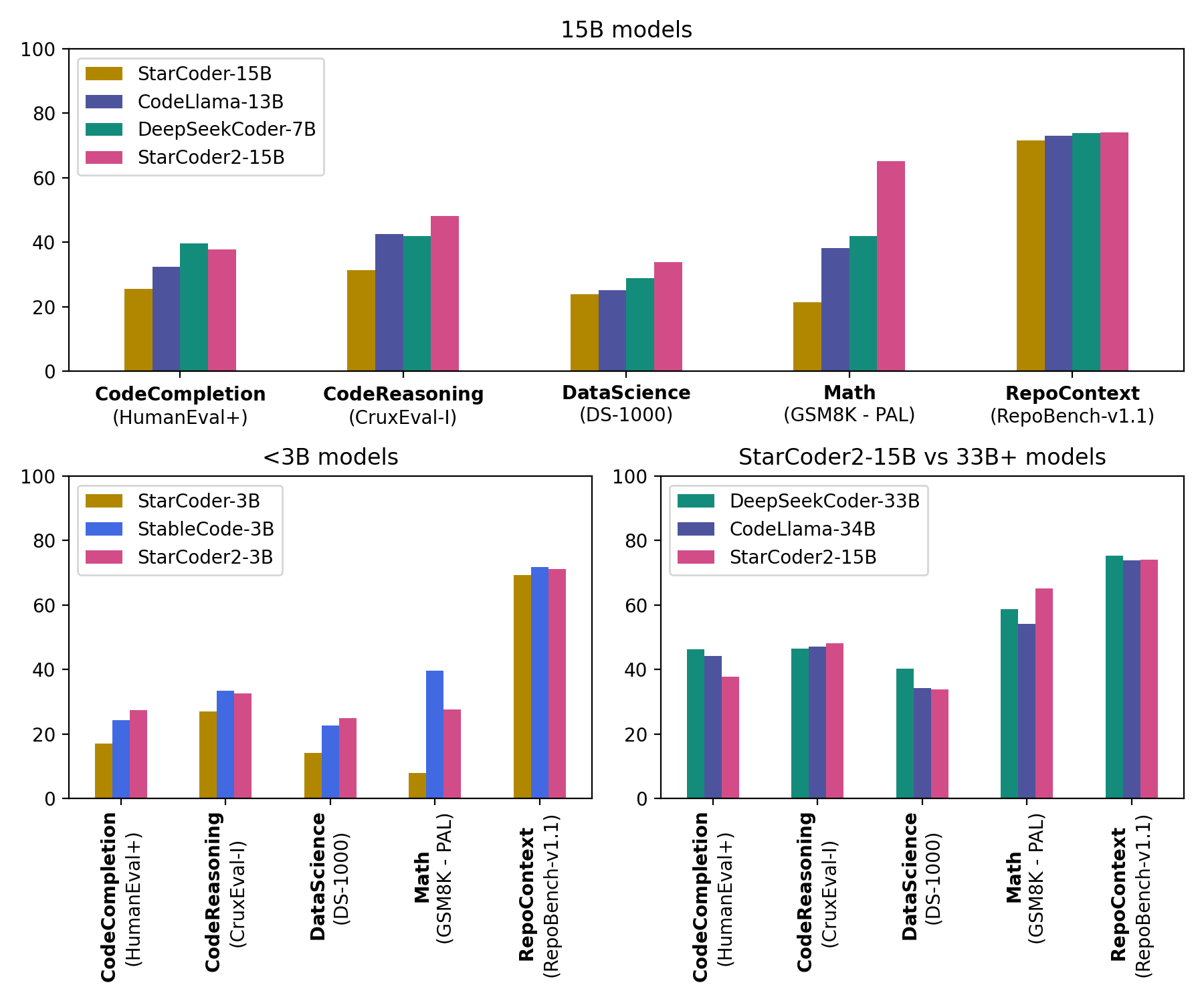

The reported results are strong: StarCoder2-15B matches models with more than 33 billion parameters on many evaluations, while StarCoder2-3B matches the performance of the previous-generation StarCoder1-15B.
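If you want to try one of these checkpoints, the sketch below shows one way to load it with the Hugging Face `transformers` library. The Hub IDs follow the `bigcode/starcoder2-<size>` naming used on the BigCode organization page. The helper name `complete` and the generation settings are illustrative assumptions. Running it requires a `transformers` release that includes StarCoder2 support, PyTorch, and enough memory for the chosen model.

```python
# Hub IDs for the three checkpoints, as published under the bigcode organization.
MODEL_IDS = {
    "3b": "bigcode/starcoder2-3b",
    "7b": "bigcode/starcoder2-7b",
    "15b": "bigcode/starcoder2-15b",
}


def complete(prompt: str, size: str = "3b", max_new_tokens: int = 64) -> str:
    """Hypothetical helper: continue `prompt` with a StarCoder2 checkpoint."""
    # Imported lazily so this file can be read or imported without the heavy dependencies.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = MODEL_IDS[size]
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    # Tokenize the prompt, generate a continuation, and decode it back to text.
    inputs = tokenizer(prompt, return_tensors="pt")
    outputs = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(outputs[0], skip_special_tokens=True)
```

Calling `complete("def fibonacci(n):")` downloads the 3B weights on first use and returns the prompt followed by the model's continuation. Passing `size="15b"` selects the flagship model, at a correspondingly higher memory cost.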

What is The Stack v2?

The heart of StarCoder2 lies in The Stack v2, a dataset built for LLM pretraining. It is a substantial upgrade over its predecessor, The Stack v1, with improved language and license detection and better filtering heuristics. The training data is also grouped by repository, so the models can be trained with repository context, which matters for understanding how the files in a project relate to one another.

The Stack v2 is derived from the Software Heritage archive, the largest public archive of software source code and its accompanying development history. Software Heritage, launched by Inria in collaboration with UNESCO, aims to preserve and share the source code of publicly accessible software. Building on this archive gives StarCoder2 training data that is both broad and carefully curated.

Developers and researchers can access The Stack v2 through the Hugging Face Hub, making it readily available for those interested in leveraging this powerful resource.
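As a rough sketch of what that access can look like, the snippet below streams a few rows with the Hugging Face `datasets` library instead of downloading everything. The dataset ID `bigcode/the-stack-v2` is the one used on the Hub. The helper name `peek` is hypothetical. Treat the details as assumptions to check against the dataset card: access may require accepting the dataset's terms and logging in, and rows may carry file metadata and identifiers rather than raw file contents.

```python
from itertools import islice

DATASET_ID = "bigcode/the-stack-v2"


def peek(n: int = 3, dataset_id: str = DATASET_ID) -> list[dict]:
    """Hypothetical helper: return the first n rows of the dataset as dictionaries."""
    # Imported lazily so this file can be read or imported without `datasets` installed.
    from datasets import load_dataset

    # streaming=True iterates over the remote data without downloading the full dataset.
    stream = load_dataset(dataset_id, split="train", streaming=True)
    return list(islice(stream, n))
```

A call such as `for row in peek(): print(sorted(row.keys()))` is a quick way to see which fields each row actually provides before building a pipeline around them.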

About BigCode

BigCode is an open scientific collaboration spearheaded by Hugging Face and ServiceNow, focusing on the responsible development of large language models for code. This initiative emphasizes transparency and the ethical use of AI technologies, ensuring that advancements in code generation are beneficial and accessible to all.

The collaboration aims to foster innovation while maintaining a commitment to responsible AI practices, which is increasingly vital in today’s rapidly evolving tech landscape.

Links for Further Exploration

For those keen to dive deeper into the resources surrounding StarCoder2 and BigCode, several links provide access to models, data governance, and additional information:

- Models: Explore the various models available for use and experimentation.

- Data & Governance: Understand the frameworks and guidelines governing the use of these powerful tools.

- Others: Access a range of other resources related to the BigCode initiative.

For comprehensive details and to discover all the resources and links, visit huggingface.co/bigcode and explore the future of code generation.

StarCoder2 is not just a technological marvel; it’s a testament to the collaborative efforts in the field of AI, promising to revolutionize how developers interact with code and enhance productivity in the software development lifecycle.
